Mellanox QM8700-F / MQM8700-HS2F, 1U high-density HDR InfiniBand switch, used for HPC/AI clusters

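Shop with confidence. Returns accepted.

Shipping: International shipments may be subject to customs processing and additional charges.

Delivery: International shipments may take additional time if they are held up by customs processing.

Returns: Accepted within 14 days. The seller pays return shipping.

Free shipping. We accept Net 30 purchase orders. Get approved in seconds with no impact on your credit.

For bulk purchases of the QM8700-F / MQM8700-HS2F, contact us toll-free on WhatsApp at (+86) 151-0113-5020 or request a quote via live chat, and a sales representative will contact you shortly.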

Description

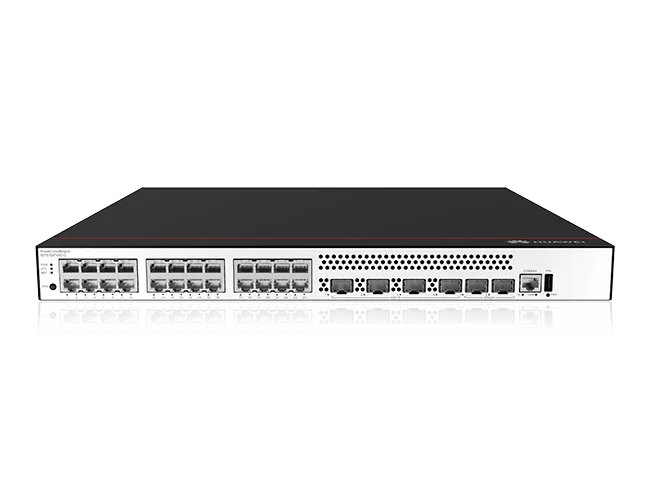

In high-performance computing and artificial intelligence, robust, low-latency network hardware is essential. The Mellanox QM8700-F and MQM8700-HS2F are HDR InfiniBand switch models built for demanding HPC cluster and AI cluster environments. Both pack a high port count into a 1U chassis for dense data center deployments.

At the core of the QM8700-F / MQM8700-HS2F is the NVIDIA Quantum switch chip, which delivers 16Tb/s of non-blocking bandwidth and sub-130ns port-to-port latency. This 1U switch provides 40 ports of HDR 200Gb/s InfiniBand, or 80 ports of HDR100 100Gb/s when connected to ConnectX-6 adapters, making it a high-density option for modern data centers. For AI model training and large-scale HPC simulations, the non-blocking design lets every port forward traffic at wire speed, which helps keep the network from becoming the bottleneck.
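The 16Tb/s figure is consistent with counting both directions of every port. The short sketch below works through that arithmetic; the function name is hypothetical, and the reading of the headline number as a full-duplex aggregate is an assumption rather than something stated on this page.

```python
def aggregate_bandwidth_tbps(ports: int, gbps_per_port: float,
                             bidirectional: bool = True) -> float:
    """Return aggregate switching bandwidth in Tb/s.

    Assumption: the headline figure counts traffic in both directions
    on every port (full duplex), which is how 40 x 200Gb/s reaches 16Tb/s.
    """
    directions = 2 if bidirectional else 1
    return ports * gbps_per_port * directions / 1000


hdr = aggregate_bandwidth_tbps(40, 200)     # 40 HDR ports at 200Gb/s
hdr100 = aggregate_bandwidth_tbps(80, 100)  # 80 HDR100 ports at 100Gb/s
print(f"HDR: {hdr} Tb/s, HDR100: {hdr100} Tb/s")
```

Under this assumption both port modes give the same aggregate, since HDR100 doubles the port count while halving the per-port rate.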

What sets this Mellanox switch apart is its integrated SHARP (Scalable Hierarchical Aggregation and Reduction Protocol) technology, which performs in-network computing. By offloading collective communication operations from CPUs to the switch fabric, it can substantially speed up AI and HPC applications, an important advantage for AI cluster workloads such as distributed deep learning. The switch can be deployed as a leaf or a spine, and its adaptive routing supports SlimFly and Dragonfly+ topologies.

Designed for reliability and ease of management, the MQM8700-HS2F features hot-swappable redundant power supplies and fans, plus P2C airflow for standard data center cooling. Managed through Mellanox's UFM (Unified Fabric Management) platform, it provides real-time telemetry, AI-driven analytics, and self-healing capabilities that are stated to recover from link failures 5,000x faster than software-based solutions. These features help maximize uptime for mission-critical HPC cluster and AI cluster operations.

Key Features

- 1U rack-mount form factor with high port density for space-optimized data centers

- 40 QSFP56 ports supporting HDR 200Gb/s InfiniBand; up to 80 HDR100 100Gb/s ports with ConnectX-6

- 16Tb/s non-blocking switching capacity with sub-130ns ultra-low latency

- Integrated SHARP in-network computing to accelerate AI/HPC collective operations

- Adaptive routing for SlimFly, Dragonfly+, and 6DT advanced network topologies

- Hot-swappable 1+1 redundant AC power supplies (100-240V) and 5+1 redundant fans

- P2C (power-to-connector) airflow for standard data center cooling environments

- x86 dual-core management CPU with 8GB system memory for intelligent fabric control

- UFM management platform with real-time telemetry, AI analytics, and self-healing networking

- Optimized for HPC clusters, AI training, big data, and hyperscale cloud infrastructures

Configuration

| Component | Specification |
|---|---|
| Part Number | QM8700-F, MQM8700-HS2F |
| Form Factor | 1U Rack Mount InfiniBand Switch |
| Switch Chip | NVIDIA Quantum (HDR InfiniBand) |
| Port Configuration | 40 x QSFP56 (200Gb/s HDR) / 80 x HDR100 (100Gb/s) |
| Switching Capacity | 16 Tb/s (non-blocking) |
| Latency | Sub-130ns (port-to-port) |
| Management CPU | x86 ComEx Broadwell D-1508 (Dual-Core) |
| System Memory | 8 GB DDR4 |
| Power Supplies | 1+1 Hot-swappable AC (100-240V, 50/60Hz) |
| Fans | 5+1 Hot-swappable (N+1 Redundancy) |
| Airflow | P2C (Power-to-Connector), Standard Depth |
| Power Consumption | 253W typical, 784W maximum |
| Operating Temp | 0°C to 40°C (32°F to 104°F) |

Compatibility

The Mellanox QM8700-F / MQM8700-HS2F fits standard 19-inch server rack enclosures and rack rails, so it integrates readily into existing data centers. It interoperates with Mellanox (NVIDIA) ConnectX-6 InfiniBand adapters at both HDR 200Gb/s and HDR100 100Gb/s speeds.

This InfiniBand switch is validated for HPC and AI cluster environments, supporting popular topologies like SlimFly, Dragonfly+, and 6DT. It works with leading HPC middleware and AI frameworks, including MPI, PyTorch, TensorFlow, and Horovod, ensuring compatibility with your existing software stack. The switch runs on MLNX-OS, Mellanox’s purpose-built operating system for InfiniBand fabrics.

Management compatibility includes full support for Mellanox UFM (Unified Fabric Management) software, enabling centralized control of multiple switches and end hosts. It also supports out-of-band management via Ethernet and in-band management over InfiniBand, providing flexible options for network administrators. The Mellanox switch is RoHS-compliant and certified for safety/EMC standards (CE/FCC), ensuring global deployment readiness.

Usage Scenarios

The Mellanox QM8700-F / MQM8700-HS2F is well suited to HPC cluster deployments, powering scientific computing, weather modeling, and finite element analysis workloads. Its 16Tb/s bandwidth and sub-130ns latency help remove network bottlenecks and keep data exchange between compute nodes fast. Multiple switches can be combined into fabrics that scale to thousands of nodes with consistent performance.

For AI cluster environments, this 1U switch is well suited to distributed deep learning training. The integrated SHARP technology optimizes collective communication operations such as all-reduce, which are critical for training large AI models. Whether the workload is computer vision, natural language processing, or generative AI, the switch shortens training times by reducing communication overhead between GPU servers.
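To see why moving all-reduce into the fabric reduces communication overhead, the sketch below compares how much data each node sends under a conventional ring all-reduce with a simplified in-network aggregation model. This is an illustrative traffic model only, not the SHARP protocol itself; the function names and the 1 GB gradient size are hypothetical.

```python
def ring_allreduce_bytes_sent(num_nodes: int, message_bytes: float) -> float:
    """Bytes each node sends in a ring all-reduce (reduce-scatter + all-gather)."""
    if num_nodes < 2:
        return 0.0
    return 2 * (num_nodes - 1) / num_nodes * message_bytes


def in_network_allreduce_bytes_sent(num_nodes: int, message_bytes: float) -> float:
    """Bytes each node sends when the switch fabric performs the reduction.

    Simplified model (assumption): every node sends its buffer to the
    fabric once, and the fabric returns the reduced result.
    """
    if num_nodes < 2:
        return 0.0
    return float(message_bytes)


gradient_bytes = 1_000_000_000  # hypothetical 1 GB gradient buffer
for n in (2, 8, 64):
    ring = ring_allreduce_bytes_sent(n, gradient_bytes)
    agg = in_network_allreduce_bytes_sent(n, gradient_bytes)
    print(f"{n:>3} nodes: ring sends {ring / 1e9:.2f} GB, "
          f"in-network sends {agg / 1e9:.2f} GB per node")
```

In this model the ring approach sends close to twice the buffer size per node as the cluster grows, while the in-network approach stays at one buffer per node. Real speedups also depend on latency, message sizes, and the collective library in use.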

Hyperscale cloud and big data infrastructures benefit from the high-density switch design. The 40-port HDR configuration enables efficient leaf-spine architectures that connect thousands of servers over 200Gb/s links. It suits big data processing frameworks like Spark and Hadoop, where fast data movement between nodes is essential for performance. The switch's 253W typical power consumption also helps contain operating costs in large-scale deployments.
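The operating-cost point can be made concrete with a rough energy estimate based on the 253W typical and 784W maximum figures from the configuration table. The sketch assumes continuous operation all year, and the electricity price is a hypothetical placeholder; cooling overhead is not included.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_energy_kwh(watts: float) -> float:
    """Energy used in one year of continuous operation, in kWh."""
    return watts * HOURS_PER_YEAR / 1000


def annual_energy_cost(watts: float, price_per_kwh: float) -> float:
    """Yearly electricity cost for one switch at a given price per kWh."""
    return annual_energy_kwh(watts) * price_per_kwh


PRICE_PER_KWH = 0.12  # hypothetical price in USD; substitute your own rate

typical_kwh = annual_energy_kwh(253)  # typical draw from the spec table
maximum_kwh = annual_energy_kwh(784)  # maximum draw from the spec table
print(f"Typical: {typical_kwh:.2f} kWh/year, "
      f"about ${annual_energy_cost(253, PRICE_PER_KWH):.2f}")
print(f"Maximum: {maximum_kwh:.2f} kWh/year, "
      f"about ${annual_energy_cost(784, PRICE_PER_KWH):.2f}")
```

Multiplying the per-switch figure by the number of switches in a fabric gives a first-order estimate for a whole deployment.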

As a high-performance server network switch, it also suits storage-intensive environments, connecting NVMe storage arrays to compute nodes over 200Gb/s InfiniBand. This provides low-latency, high-bandwidth access to storage, which is critical for database, data warehousing, and content delivery workloads. The switch's redundant power supplies and fans help maintain availability for mission-critical applications.

Frequently Asked Questions

Q1: What is the main difference between QM8700-F and MQM8700-HS2F?

A: The MQM8700-HS2F is a pre-configured model with 2 AC power supplies, P2C airflow, and a rail kit, while the QM8700-F is the base model (same hardware, different bundle).

Q2: What makes this switch ideal for AI clusters?

A: It features **SHARP in-network computing**, which offloads collective communication operations (e.g., all-reduce) from CPUs/GPUs to the switch. The seller cites AI training speedups of up to 10x compared to traditional Ethernet switches; actual gains depend on the workload.

Q3: Can it be used in both leaf and spine roles in a data center?

A: Absolutely. The InfiniBand switch supports advanced adaptive routing for SlimFly and Dragonfly+ topologies, making it suitable for both leaf (server-facing) and spine (inter-leaf) roles in high-density fabrics.

Q4: What is the maximum number of servers it can connect in an HPC cluster?

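A: With 40 HDR 200Gb/s ports, it can connect up to 40 servers directly when every port faces a server. In HDR100 mode (80 ports), capacity doubles to 80 servers per switch at 100Gb/s each. In a multi-switch fabric, some leaf ports serve as uplinks to spine switches, so the server count per leaf is lower.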
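For clusters larger than one switch, a common way to estimate capacity is a two-tier non-blocking leaf-spine (fat-tree) layout built from identical switches. The sketch below sizes such a fabric under stated assumptions: each leaf splits its ports evenly between servers and uplinks, and each leaf has one link to every spine. The class and function names are hypothetical, and real designs may use oversubscription or other topologies.

```python
from dataclasses import dataclass


@dataclass
class FatTreePlan:
    leaf_switches: int
    spine_switches: int
    servers_per_leaf: int
    max_servers: int


def two_tier_nonblocking(radix: int) -> FatTreePlan:
    """Size the largest non-blocking two-tier fabric from identical switches.

    Assumptions: 1:1 subscription (half of each leaf's ports face servers,
    half face spines) and one link from every leaf to every spine.
    """
    if radix < 2 or radix % 2:
        raise ValueError("radix must be an even number of ports, at least 2")
    down = radix // 2    # server-facing ports per leaf
    spines = radix // 2  # one uplink from each leaf to each spine
    leaves = radix       # each spine port connects to a different leaf
    return FatTreePlan(leaves, spines, down, leaves * down)


plan = two_tier_nonblocking(40)  # 40-port HDR switch
print(plan)
```

With a 40-port radix this gives 20 servers per leaf and 800 servers across 40 leaves and 20 spines. Calling `two_tier_nonblocking(80)` models the HDR100 split-port mode under the same assumptions.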

Related Products

| Product | Part Number | Availability | Condition | Original Price | Current Price | Savings |
|---|---|---|---|---|---|---|
| Mellanox QM8700-F / MQM8700-HS2F, 1U high-density ... | QM8700-F / MQM8700-H... | In Stock | Used | $15,862.00 | $14,705.00 | $1,157.00 |
| DELL DS-7730B high-density 128-port Fibre Channel switch (32G SFP+ and 64G ... | DELL DS-7730B... | In Stock | New | $197,143.00 | $167,999.00 | $29,144.00 |
| Brocade BR-G730-128 high-density 128-port Fibre Channel switch (32G SFP+ ... | Brocade BR-G730-128... | In Stock | New | $189,143.00 | $167,143.00 | $22,000.00 |
| Cisco Catalyst C9500-48Y4C 48-port SFP+ with GLC-TE module... | C9500-48Y4C... | In Stock | New | $11,999.00 | $10,286.00 | $1,713.00 |
| G630-96-32G-R 72-PORT 32GB SAN switch with enterprise license and ... | G630-96-32G-R... | In Stock | New | $52,999.00 | $51,429.00 | $1,570.00 |
| Brocade BR-G720-56-64G-R-64GBPS 56-port Fibre Channel switch... | BR-G720-56-64G-R... | In Stock | New | $45,999.00 | $45,200.00 | $799.00 |
| LIS-MSRB-IPS-3Y 3-year authorization for MSR3610-I-DP+DDR4-32GB ... | LIS-F1000-AV-3Y... | In Stock | New | $599.00 | $549.00 | $50.00 |
| LIS-MSRB-IPS-3Y 3-year authorization for MSR3610-I-DP+DDR4-32GB ... | LIS-MSRB-IPS-3Y... | In Stock | New | $999.00 | $899.00 | $100.00 |