For bulk purchases of the Inspur KR6288X2-A0, contact us by WhatsApp at (+86) 151-0113-5020 (free call) or request a quote through live chat, and a sales representative will get back to you shortly.

Inspur KR6288X2-A0 AI Server | 8x NVIDIA HGX H200 | Dual Intel Xeon 8558P | 2TB DDR5

Keywords

Inspur KR6288X2-A0, NVIDIA HGX H200, Intel Xeon 8558P, 2TB DDR5 RAM, AI Training Server, Generative AI, HPC Server, Buy Inspur Server

Description

Step into the future of hyperscale artificial intelligence with the Inspur KR6288X2-A0. This flagship AI server is engineered to train the world's most complex Large Language Models (LLMs) on the NVIDIA HGX H200 8-GPU architecture. With a combined 1128GB of HBM3e memory across the HGX baseboard (141GB per GPU), the system relieves the memory bottlenecks of earlier GPU generations, letting data scientists run very large models efficiently on fewer interconnected nodes.

At the heart of this compute giant are dual Intel Xeon 8558P processors. Each CPU has 48 cores, 260MB of cache, and a 2.7GHz base clock at a 350W TDP. Together they provide 96 physical x86 cores to prepare data and orchestrate the GPU workload. To keep the processing pipeline fed, the system is populated with 32x 64GB DDR5-5600 ECC RDIMMs, for a total of 2TB of system memory.
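The host totals above follow directly from the per-part counts. A minimal sketch of that arithmetic, using only figures from this listing; the variable names are illustrative, and "TB" in the output means 1024 GB:

```python
# Derive host-side totals from the per-part figures in the configuration.
CPU_SOCKETS = 2
CORES_PER_CPU = 48       # Intel Xeon 8558P
DIMM_COUNT = 32
DIMM_SIZE_GB = 64        # DDR5-5600 ECC RDIMM

total_cores = CPU_SOCKETS * CORES_PER_CPU
total_memory_gb = DIMM_COUNT * DIMM_SIZE_GB
dimms_per_socket = DIMM_COUNT // CPU_SOCKETS
memory_per_core_gb = total_memory_gb / total_cores

print(f"Physical cores: {total_cores}")
print(f"System memory: {total_memory_gb} GB ({total_memory_gb / 1024:.0f} TB)")
print(f"DIMMs per socket: {dimms_per_socket}")
print(f"Memory per core: {memory_per_core_gb:.2f} GB")
```

This works out to 96 cores, 2048 GB (16 DIMMs per socket), and roughly 21 GB of RAM per physical core for data loading and preprocessing.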

Storage is tiered for both reliability and speed. The host operating system resides on two 480GB SATA 6Gbps 2.5-inch read-intensive SSDs, while training data and checkpoints are handled by two 3.84TB U.2 NVMe SSDs with 16 GT/s (PCIe 4.0) links, keeping data flowing quickly to the GPUs. The hardware is powered by a redundant array of Titanium-rated high-efficiency PSUs (supporting 220VAC or 240VDC). Backed by a 3-year warranty, this server is a sound investment for enterprise data centers pushing the limits of AI training.
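To put the 16 GT/s figure in perspective, here is a rough sketch of the theoretical link ceiling per data drive. It assumes (not stated in the listing) that each U.2 drive uses a x4 PCIe link and that 16 GT/s means PCIe 4.0 with 128b/130b encoding; protocol overhead and the drive's own media limits are ignored, so real throughput will be lower.

```python
# Theoretical one-direction PCIe link ceiling for a U.2 NVMe drive.
GT_PER_S = 16          # per-lane transfer rate, from the listing
LANES = 4              # assumption: x4 link per U.2 drive
ENCODING = 128 / 130   # 128b/130b line-code efficiency (PCIe 3.0 and later)
DRIVES = 2

def link_ceiling_gb_s(gt_per_s: float, lanes: int) -> float:
    """Raw link throughput in GB/s, before protocol overhead."""
    bits_per_s = gt_per_s * 1e9 * lanes * ENCODING
    return bits_per_s / 8 / 1e9

per_drive = link_ceiling_gb_s(GT_PER_S, LANES)
print(f"Per-drive link ceiling: {per_drive:.2f} GB/s")
print(f"Two drives striped: {per_drive * DRIVES:.2f} GB/s")
```

Under these assumptions each link tops out near 7.9 GB/s, or about 15.8 GB/s if both drives are striped.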

Key Features

- Next-Gen AI Acceleration: 1x NVIDIA HGX H200 8-GPU baseboard (Nvidia HGX-200-8GPU) delivering 1128GB of HBM3e GPU memory.

- Elite Processing: 2x Intel Xeon 8558P processors (48 Cores, 2.7GHz, 260MB Cache, 350W).

- Large Memory Capacity: 2TB total system RAM configured via 32x 64GB DDR5-5600 ECC RDIMMs.

- High-Speed Data Tier: 2x 3.84TB U.2 NVMe SSDs (16 GT/s) for rapid checkpointing and data ingestion.

- Reliable OS Boot: 2x 480GB SATA 6Gbps 2.5" SSDs.

- Titanium Efficiency: Equipped with ultra-efficient Titanium power supplies (3200W/2700W 220VAC/240VDC configuration).

- Enterprise Guarantee: Backed by a 3-Year Warranty.

Configuration

| Component | Specification | Quantity |
|---|---|---|
| Brand / Model | Inspur KR6288X2-A0 (H200 complete system) | 1 |
| Processor (CPU) | Intel Xeon 8558P, 2.7GHz, 48 cores, 260MB cache, 350W | 2 |
| Memory (RAM) | 64GB DDR5-5600 ECC RDIMM | 32 |
| System Disk | 480GB SATA 6Gbps 2.5" SSD, read-intensive | 2 |
| Data Disk | 3.84TB U.2 NVMe 16 GT/s 2.5", R-Standard | 2 |
| GPU Baseboard | NVIDIA HGX H200 8-GPU (HGX-200-8GPU), 1128GB | 1 |
| Power Supply | 3200W / 2700W Titanium, 220VAC or 240VDC | 1 |
| Warranty | 3 years | 1 |

Compatibility

The Inspur KR6288X2-A0 is a premier platform designed for the NVIDIA AI Enterprise software stack. It supports current deep learning frameworks such as PyTorch, TensorFlow, and JAX. Operating system compatibility includes enterprise standards such as Ubuntu Server 22.04 LTS and Red Hat Enterprise Linux (RHEL) 9. The HGX H200 architecture uses NVLink interconnects between the GPUs and is designed to work with high-speed NDR (400Gb/s) InfiniBand networking cards for large-scale clustering.

Usage Scenarios

This server is specifically architected for Foundation Model Training. The 1128GB of aggregate GPU memory across the 8-GPU baseboard lets data scientists keep very large LLMs resident in GPU memory, enabling larger batch sizes and significantly shorter training times for generative AI models.
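Whether a given model "fits" depends heavily on precision and optimizer state. A rough sketch, assuming the common rule of thumb of about 16 bytes per parameter for mixed-precision training with Adam (FP16 weights and gradients plus FP32 master weights and two FP32 moments) and about 2 bytes per parameter for FP16/BF16 inference weights. It also assumes the state is sharded evenly across all 8 GPUs (for example with ZeRO or FSDP) and ignores activations and buffers, so treat results near the limit as optimistic.

```python
# Rough check: does a model's state fit in the baseboard's aggregate HBM?
GPU_COUNT = 8
HBM_PER_GPU_GB = 141
BYTES_PER_PARAM_TRAINING = 2 + 2 + 4 + 4 + 4   # rule of thumb, = 16
BYTES_PER_PARAM_INFERENCE = 2                  # FP16/BF16 weights only

def state_gb(params_billion: float, bytes_per_param: int) -> float:
    # 1e9 parameters * bytes per parameter / 1e9 bytes per GB -> GB
    return params_billion * bytes_per_param

def fits(params_billion: float, bytes_per_param: int,
         gpus: int = GPU_COUNT, hbm_gb: int = HBM_PER_GPU_GB) -> bool:
    # Assumes perfectly even sharding and no activation memory.
    return state_gb(params_billion, bytes_per_param) <= gpus * hbm_gb

for size in (13, 70, 175):
    train = state_gb(size, BYTES_PER_PARAM_TRAINING)
    infer = state_gb(size, BYTES_PER_PARAM_INFERENCE)
    print(f"{size}B params: training state ~{train:.0f} GB "
          f"(fits={fits(size, BYTES_PER_PARAM_TRAINING)}), "
          f"FP16 weights ~{infer:.0f} GB")
```

By this estimate a 70B-parameter model's training state (about 1120 GB) only barely fits before activations are counted, whereas a 175B model needs multiple nodes for training but its FP16 weights (about 350 GB) fit comfortably for inference.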

It also serves as a high-throughput inference node. For customer-facing generative AI applications that require real-time text, image, or video generation, the high memory bandwidth of the H200 GPUs helps serve many concurrent user requests with low latency.
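Why memory bandwidth matters for inference: in autoregressive decoding each step must stream the model weights from HBM, so bandwidth sets an upper bound on step rate. A back-of-envelope sketch, assuming NVIDIA's published 4.8 TB/s HBM bandwidth per H200 GPU (not stated in this listing), weights split evenly across 8 GPUs, and one full weight read per step. KV-cache reads, interconnect traffic, and compute limits are ignored, so actual throughput will be lower.

```python
# Memory-bandwidth ceiling on autoregressive decode throughput.
HBM_BW_TB_S = 4.8   # assumption: per-GPU H200 HBM bandwidth
GPUS = 8

def max_decode_steps_per_s(weights_gb: float, gpus: int = GPUS,
                           bw_tb_s: float = HBM_BW_TB_S) -> float:
    """Steps/s if every step reads each GPU's weight shard exactly once."""
    bytes_per_gpu = weights_gb * 1e9 / gpus
    return bw_tb_s * 1e12 / bytes_per_gpu

steps = max_decode_steps_per_s(140)   # e.g. 70B parameters in FP16
for batch in (1, 32):
    # In the memory-bound regime, all sequences in a batch share one weight read.
    print(f"batch={batch}: <= {steps * batch:,.0f} tokens/s")
```

The ceiling of roughly 274 steps per second is why batching concurrent requests matters: until compute becomes the bottleneck, larger batches raise total tokens per second without extra weight traffic.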

Frequently Asked Questions

Q: What is the primary difference between an HGX H100 and this HGX H200 system?

A: The primary upgrade is memory capacity and bandwidth. A standard 8-GPU H100 system has 640GB of GPU memory, while the NVIDIA HGX H200 8-GPU baseboard (Nvidia HGX-200-8GPU) featured here has 1128GB of faster HBM3e memory (141GB per GPU). This allows significantly larger models to run on a single node without running into memory bottlenecks.
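The comparison can be quantified with a short sketch. Per-GPU capacities (80GB and 141GB) follow from the totals quoted above; the bandwidth figures of 3.35 TB/s (H100 SXM) and 4.8 TB/s (H200) are NVIDIA's published numbers and are an addition not found in this listing.

```python
# Compare aggregate memory and per-GPU bandwidth of 8-GPU HGX baseboards.
GPUS = 8
HBM_GB = {"H100": 80, "H200": 141}          # per GPU, implied by 640GB / 1128GB
HBM_BW_TB_S = {"H100": 3.35, "H200": 4.8}   # per GPU, NVIDIA published figures (assumption)

totals = {name: GPUS * gb for name, gb in HBM_GB.items()}
capacity_gain = totals["H200"] / totals["H100"]
bandwidth_gain = HBM_BW_TB_S["H200"] / HBM_BW_TB_S["H100"]

print(f"Aggregate HBM: {totals}")
print(f"Capacity: {capacity_gain:.2f}x (+{totals['H200'] - totals['H100']} GB)")
print(f"Per-GPU bandwidth: {bandwidth_gain:.2f}x")
```

That is about 1.76x the memory (488GB more per node) and about 1.43x the per-GPU bandwidth.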

Q: Are the NVMe drives configured for redundancy?

A: The system includes two 3.84TB U.2 NVMe drives. In AI training environments these are typically configured as a RAID 0 stripe for maximum read/write throughput to feed data to the GPUs, though they can be configured as RAID 1 when data redundancy matters more than speed.
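The trade-off between the two layouts can be sketched as follows. This is a simplified model (the class and function names are illustrative): RAID 0 sums capacity and tolerates no drive failure, while RAID 1 mirrors the data, exposes one drive's capacity, and survives the loss of one drive. Filesystem and controller overhead are ignored.

```python
from dataclasses import dataclass

@dataclass
class RaidLayout:
    level: str
    usable_tb: float
    failures_tolerated: int

def two_drive_layouts(drive_tb: float) -> list[RaidLayout]:
    """Usable capacity and fault tolerance for a two-drive array."""
    return [
        RaidLayout("RAID 0", 2 * drive_tb, 0),   # striped: capacity adds up
        RaidLayout("RAID 1", drive_tb, 1),       # mirrored: one drive's worth
    ]

for layout in two_drive_layouts(3.84):
    print(f"{layout.level}: {layout.usable_tb:.2f} TB usable, "
          f"tolerates {layout.failures_tolerated} drive failure(s)")
```

With the two 3.84TB data drives, RAID 0 yields 7.68TB with no redundancy and RAID 1 yields 3.84TB that survives a single drive failure.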

Products Related to This Item

Dell PowerEdge R740XD2 2U High Density Storage Ser... - Part No.: Dell PowerEdge R740X...

- Stock Status: In Stock

- Condition: New

- Original Price: $28,999.00

- Current Price: $27,999.00

- You Save: $1,000.00

Lenovo WR5480G3/ 2*Cooling Fans/ 2-Port 10G Modul... - Part No.: WR5480G3...

- Stock Status: In Stock

- Condition: New

- Original Price: $45,888.00

- Current Price: $44,999.00

- You Save: $889.00

Lenovo ThinkSystem HR650X Server 2x Xeon Silver 4214 64GB RA... - Part No.: ThinkSystem HR650X...

- Stock Status: In Stock

- Condition: New

- Original Price: $4,999.00

- Current Price: $4,599.00

- You Save: $400.00

For US Colocation Bare-Metal Deployment, 8x NVIDIA H200 141GB SXM5 GPUs ... - Part No.: SR680AV3...

- Stock Status: In Stock

- Condition: New

- Original Price: $329,999.00

- Current Price: $328,999.00

- You Save: $1,000.00

ThinkSystem SR665V3 2U Rack Server 24-Bay 2.5-inch Enterprise Server... - Part No.: SR665V3...

- Stock Status: In Stock

- Condition: New

- Original Price: $5,999.00

- Current Price: $5,599.00

- You Save: $400.00

-

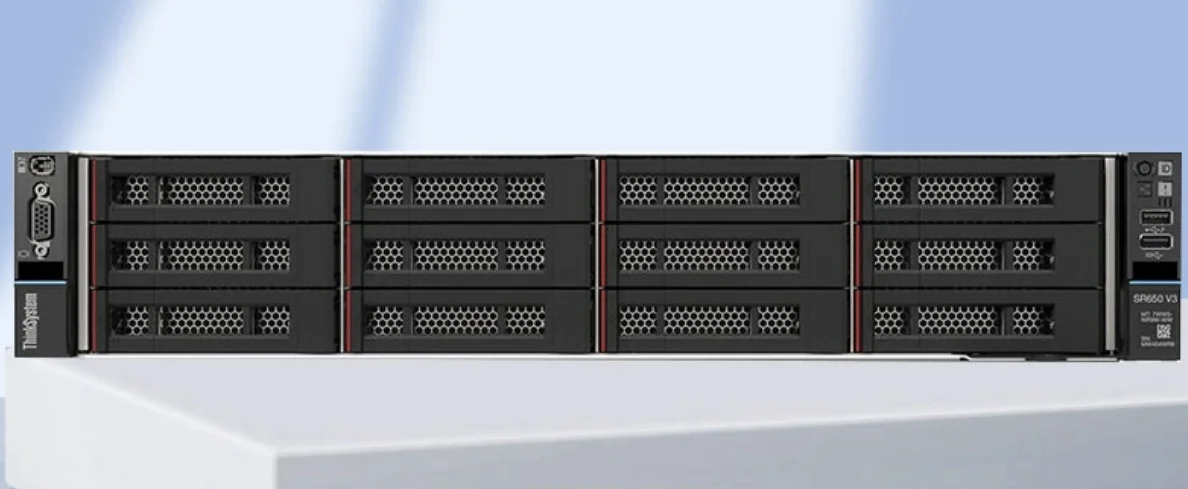

Lenovo ThinkSystem SR650 V3 2U 랙 서버 - 듀얼 Xeon Gold... - 부품 번호: Lenovo ThinkSystem S...

- 재고 상태:In Stock

- 상태:새 상품

- 기존 가격:$13,199.00

- 현재 가격: $12,999.00

- 절약액 $200.00

- 지금 채팅하기 이메일 전송

Dell PowerEdge R760xs 16th Generation 2U Rack Server - Dual Xeon Gol... - Part No.: R760xs...

- Stock Status: In Stock

- Condition: New

- Original Price: $14,199.00

- Current Price: $13,999.00

- You Save: $200.00

HPE ProLiant Server DL-360-G11 - Rack (SFF) 2*6544Y/3*300G... - Part No.: DL360 Gen11...

- Stock Status: In Stock

- Condition: New

- Original Price: $23,888.00

- Current Price: $21,666.00

- You Save: $2,222.00